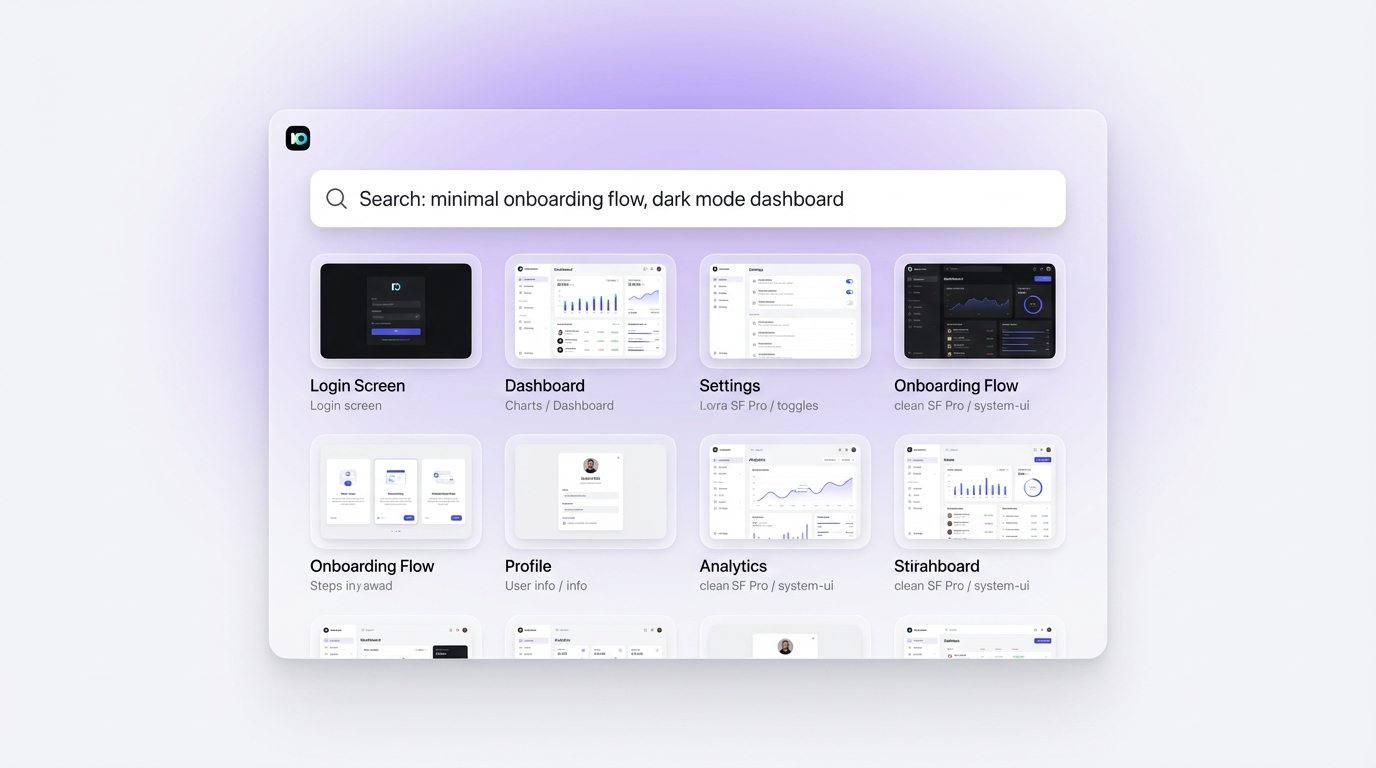

TL;DR: Natural language search lets designers type queries the way they actually think, such as "dark SaaS onboarding screen with progress bar", rather than sift through nested category tags. AI design tools parse intent, context, and visual attributes from plain English, then surface UI references that genuinely match. This guide covers what natural language search means for UI design, how it works under the hood, which tools support it, how it compares to keyword search, and how to write queries that return the most precise results.

Introduction

Every designer knows the problem. You open a design gallery, type "dashboard," and get 40,000 results. Half are irrelevant. The other half require you to click through ten more filters before you find anything close to what you had in mind.

Natural language search changes that. Instead of forcing designers into keyword syntax, it accepts queries that sound like human thought: "minimal fintech app with bottom navigation and a card layout" or "e-commerce checkout flow with trust badges." The AI interprets intent, not just individual words, and returns UI references that match the actual concept.

This shift matters at every stage of the creative process — from early exploration when direction is still vague, to late-stage refinement when you need a specific reference to back a design decision. This guide covers how the technology works, which tools support it, and how to write queries that return the sharpest results.

What is natural language search in the context of UI design?

Natural language search is a method of querying a database or AI system using ordinary conversational phrases rather than precise keywords or structured filters. In the context of UI design, it means a designer can type "a clean login screen for a banking app with a white background and minimal form fields" and receive relevant visual results — instead of needing to know the exact tag or category name the platform uses.

Traditional design galleries organize content through taxonomies: categories, tags, style labels, color filters. Those systems work well for broad exploration but break down when a designer has a specific concept in mind. A query like "onboarding for a meditation app, warm colors, illustrated characters" does not map to a single tag. It requires the system to parse multiple attributes simultaneously.

Natural language search tools use large language models (LLMs) or vector-based semantic search to understand the full meaning of a query. According to Nielsen Norman Group, users search more successfully when systems respond to intent rather than to exact strings. Applied to design search, this means a designer who describes a concept should still get relevant references back, even when no single keyword matches perfectly.

The core advantage is cognitive: designers think in sentences, not tags. Aligning the search input with how designers actually think reduces friction and speeds up the inspiration phase significantly. A designer who opens a traditional gallery still needs to translate their mental image into a keyword. A designer who opens a natural language search tool can skip that translation entirely.

How does AI interpret design-related search queries in plain English?

When you type a natural language query into an AI-powered design search tool, the system does not run a simple keyword match. It converts your text into a high-dimensional numeric representation called a vector embedding, then compares that embedding, typically using cosine similarity, against a library of UI screenshots or design assets encoded into the same vector space.

This process — called semantic search or vector similarity search — allows the system to surface results based on conceptual proximity rather than exact word matches. A query for "fintech dashboard with dark mode" can surface results tagged as "data visualization app" or "crypto wallet interface" if those designs carry the same visual and conceptual DNA.
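To make the idea concrete, here is a minimal sketch of vector similarity search in Python. It assumes the embeddings already exist: the four-dimensional vectors below are invented by hand (real models produce hundreds of learned dimensions that carry no human-readable labels), and `cosine_similarity` and `search` are illustrative helpers, not any tool's actual API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 4-dimensional embeddings. Real models learn many unlabeled
# dimensions; these labels are invented purely for illustration:
# [finance, dark theme, data density, playfulness]
LIBRARY = {
    "crypto wallet interface": [0.9, 0.8, 0.6, 0.1],
    "data visualization app": [0.6, 0.7, 0.9, 0.1],
    "kids learning game": [0.0, 0.1, 0.2, 0.95],
    "light recipe blog": [0.0, 0.0, 0.3, 0.5],
}


def search(query_vec: list[float], library: dict[str, list[float]], top_k: int = 2):
    """Rank library items by cosine similarity to the query embedding."""
    scored = [(name, cosine_similarity(query_vec, vec)) for name, vec in library.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Stand-in embedding for the text "fintech dashboard with dark mode".
query = [0.8, 0.9, 0.7, 0.0]
for name, score in search(query, LIBRARY):
    print(f"{name}: {score:.3f}")
```

Neither top result contains the words "fintech" or "dashboard"; they rank highly because their vectors point in roughly the same direction as the query's vector, which is the conceptual proximity described above.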

Figma notes that describing a visual concept in plain English is often more precise than hunting through hierarchical categories — a principle that applies directly to search as well as moodboarding.

The quality of results depends on both the model's training data and the quality of the query. Vague queries like "modern app" return broad results. Specific queries that describe layout, color temperature, user context, and interaction type return far more targeted references. The AI also captures nuance: "a checkout flow that feels trustworthy" implies certain visual attributes — clean spacing, familiar patterns, low-friction forms — even when those attributes appear nowhere in the query text.

Tools trained specifically on design assets, like Inspo AI, tend to be more precise for design queries than general-purpose AI search engines, because their embedding models learn the visual grammar of UI design rather than the statistical patterns of general web text.

How does natural language UI search differ from traditional keyword-based search?

Keyword-based search matches text strings. If you type "blue button," the system finds assets tagged "blue" and "button." It returns exact matches and misses anything described differently by the person who uploaded it. If a designer tagged a component "CTA" instead of "button," keyword search misses it entirely.

Natural language search understands meaning. The same query — typed as "a primary call-to-action in a cool color" — surfaces blue buttons, teal CTAs, navy primary actions, and similar results based on conceptual alignment, not string matching.
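The coverage gap can be sketched with a toy example. The tag data, asset names, and `CONCEPT_OF` map below are all hypothetical, and the hand-written concept map is only a crude stand-in for the associations a real embedding model learns; production tools do not work from a synonym table. The point is the contrast: exact string matching finds only the asset tagged "blue" and "button", while matching on meaning also finds the teal CTA and the navy primary action.

```python
# Hypothetical tag data: each asset carries the tags its uploader chose.
ASSETS = {
    "asset-1": {"blue", "button"},
    "asset-2": {"teal", "cta"},
    "asset-3": {"navy", "primary-action"},
    "asset-4": {"red", "alert"},
}

# Hand-written concept map: a crude stand-in for the associations an
# embedding model learns from data. Not how production tools work.
CONCEPT_OF = {
    "blue": "cool-color", "teal": "cool-color", "navy": "cool-color",
    "red": "warm-color",
    "button": "call-to-action", "cta": "call-to-action",
    "primary-action": "call-to-action",
}


def keyword_search(query: str, assets: dict[str, set[str]]) -> list[str]:
    """Return assets whose tags contain every query word verbatim."""
    terms = set(query.lower().split())
    return sorted(name for name, tags in assets.items() if terms <= tags)


def to_concepts(words) -> set[str]:
    """Map each word to its concept; unknown words pass through unchanged."""
    return {CONCEPT_OF.get(w, w) for w in words}


def concept_search(query: str, assets: dict[str, set[str]]) -> list[str]:
    """Return assets whose tags cover every concept in the query."""
    wanted = to_concepts(query.lower().split())
    return sorted(name for name, tags in assets.items() if wanted <= to_concepts(tags))


print(keyword_search("blue button", ASSETS))
print(concept_search("blue button", ASSETS))
```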

According to SparkFabrik, modern AI design tools increasingly use multimodal models that interpret both text descriptions and visual features, allowing designers to mix natural language with visual reference images in a single search pass.

The practical difference shows up in three ways:

Coverage. Natural language search reaches assets labeled differently but visually similar. It removes the dependency on consistent tagging across an entire asset library.

Specificity. A designer who knows exactly what they want can describe it in a sentence and get close results, without clicking through nested filters. The query becomes the filter.

Discovery. Natural language search surfaces unexpected options that match the conceptual intent but sit outside the designer's usual reference pool. This is where genuinely new creative direction tends to emerge — not from browsing familiar sources, but from the AI surfacing references the designer had not considered.

Traditional keyword search still has a place for precise component lookup, such as finding a specific icon or a named pattern. But for inspiration-stage exploration, natural language search returns more valuable results faster.

What kinds of UI patterns can you find using natural language queries?

The range is broad. Effective natural language queries for UI inspiration break into several categories:

Layout patterns. "Sidebar navigation with collapsible sections and dark background" or "full-bleed hero section with centered CTA button" return layout-first references that establish structural direction before any visual styling decisions.

Interaction states. "Empty state illustration for a no-results search page" or "loading skeleton for a card grid" surface micro-pattern references that are hard to find through traditional tag-based search.

Vertical-specific UI. "HealthTech patient portal with appointment cards" or "B2B SaaS pricing table with feature comparison" let designers find industry-relevant references without knowing the exact gallery categories for their sector.

Emotional and tonal attributes. "Playful onboarding flow with bright colors and rounded corners" or "enterprise dashboard that feels calm and professional" give the AI tonal attributes to match against visual tone in the asset library — a type of query that keyword search cannot support at all.

Compound queries. The most powerful queries combine layout, color, interaction, and context: "mobile checkout flow with sticky bottom CTA, warm tones, product thumbnails in cart." Each attribute narrows the result set.
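How each attribute narrows the result set can be sketched as a filter over hypothetical attribute tags. This is a simplification: semantic search tools usually score and rank results rather than applying hard filters, and the attributes here are split out of the query by hand rather than parsed by a model. The screen names and tags are invented for illustration.

```python
# Hypothetical screens, each described by a set of attribute tags.
SCREENS = {
    "shop-a-cart": {"mobile", "checkout", "warm-tones", "sticky-cta", "thumbnails"},
    "shop-b-cart": {"mobile", "checkout", "cool-tones", "sticky-cta"},
    "shop-c-payment": {"web", "checkout", "warm-tones", "sticky-cta"},
    "news-home": {"mobile", "feed", "warm-tones"},
    "grocery-checkout": {"mobile", "checkout", "warm-tones", "thumbnails"},
}

# Attributes from "mobile checkout flow with sticky bottom CTA, warm tones,
# product thumbnails in cart", split out by hand for this sketch.
QUERY_ATTRIBUTES = ["mobile", "checkout", "sticky-cta", "warm-tones", "thumbnails"]


def narrow(screens: dict[str, set[str]], attributes: list[str]):
    """Yield (attribute just added, screens matching all attributes so far)."""
    required: set[str] = set()
    for attr in attributes:
        required.add(attr)
        matches = sorted(name for name, tags in screens.items() if required <= tags)
        yield attr, matches


for attr, matches in narrow(SCREENS, QUERY_ATTRIBUTES):
    print(f"+ {attr:<11} -> {len(matches)} match(es): {matches}")
```

With this sample data the match count drops with each of the first four attributes, and the fifth confirms the single remaining screen.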

UX Pilot notes that compound descriptive queries produce the most targeted results in AI design tools, particularly when the designer includes both functional context (what the screen does) and visual context (how it should look).

A tool like Inspo AI supports this range of query types because its search index covers 150,000+ design assets across UI patterns, brand references, and component libraries — giving natural language search a rich pool to match against.

What are the best tools for UI inspiration search using AI?

Several tools now offer AI-powered or natural language search for design inspiration. Their strengths vary significantly.

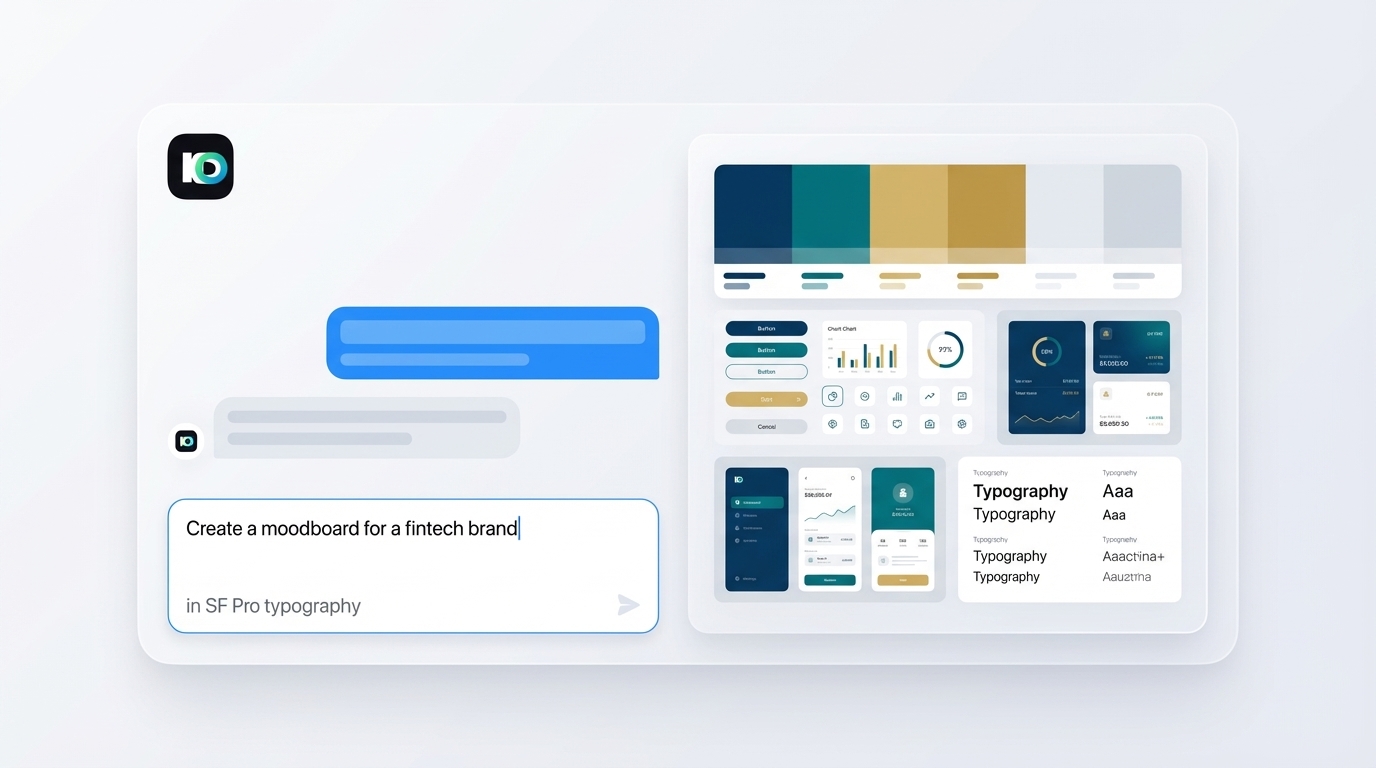

Inspo AI pairs a natural language search engine with a 150,000+ asset library that spans UI screenshots, brand identities, and design systems. Its search model is trained specifically on design assets, so queries that describe visual intent return precise results. It also connects directly to a moodboard builder, so assets you find go straight onto a working canvas with one click.

Dribbble and Behance use keyword and tag-based discovery with limited semantic search capability. Both are rich in quantity but require manual filtering to isolate relevant references.

Mobbin indexes real app screenshots organized by screen type. Its search supports some natural language filtering, though coverage focuses primarily on mobile-native UI.

Muzli (by InVision) aggregates design inspiration from multiple sources and supports keyword search with editorial curation rather than semantic AI search.

Figma Community is strong for component-level search but relies on creator-applied tags rather than semantic interpretation of content.

SparkFabrik identifies the key differentiator between AI design tools as whether the search model understands design vocabulary specifically, or applies a general-purpose model that treats design queries the same as any text query. Tools trained on design-specific datasets consistently outperform general tools on visual design searches.

How do designers use natural language search to speed up early-stage design?

The early stage of a design project is where direction gets set but nothing is confirmed. Designers collect references, test visual hypotheses, and align with stakeholders on a direction before touching a frame. This phase historically takes hours of manual browsing.

Natural language search compresses it significantly. A product designer starting a fintech mobile app can type four targeted queries — "minimal banking home screen," "fintech card UI with account balance display," "dark mode transaction history list," and "biometric login screen for finance app" — and collect a focused reference board in under 20 minutes.

The speed benefit compounds when teams work together. Instead of a designer sending a vague description to a stakeholder, the team queries the same tool together and agrees on a visual direction based on real references, not abstract language.

Neolemon reports that using AI to accelerate reference collection cuts early-stage design time from five to eight hours down to 15 to 30 minutes for experienced designers who write precise queries.

The workflow improves further when search connects directly to a moodboard. Inspo AI combines its search layer with a built-in moodboard builder, so results go directly onto a working canvas with one click — no copy-paste, no tab-switching.

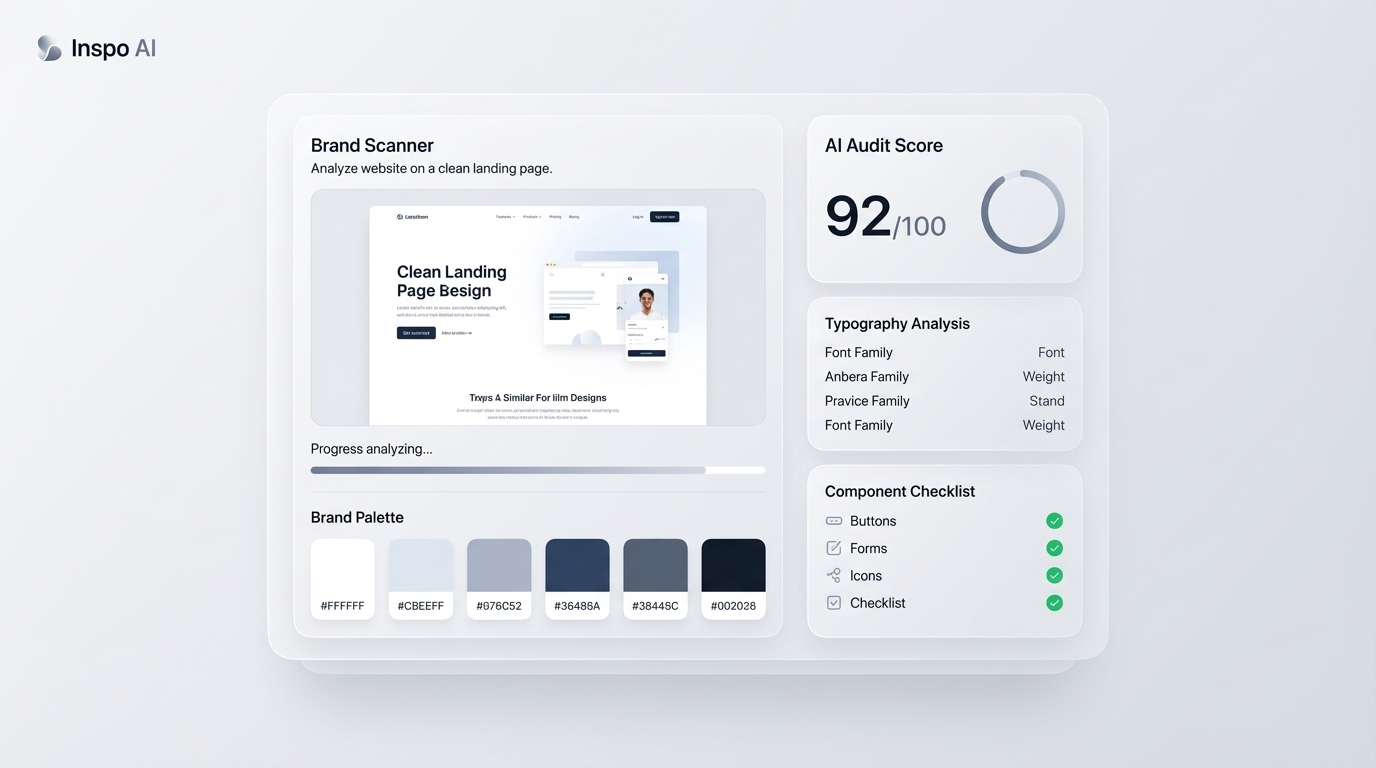

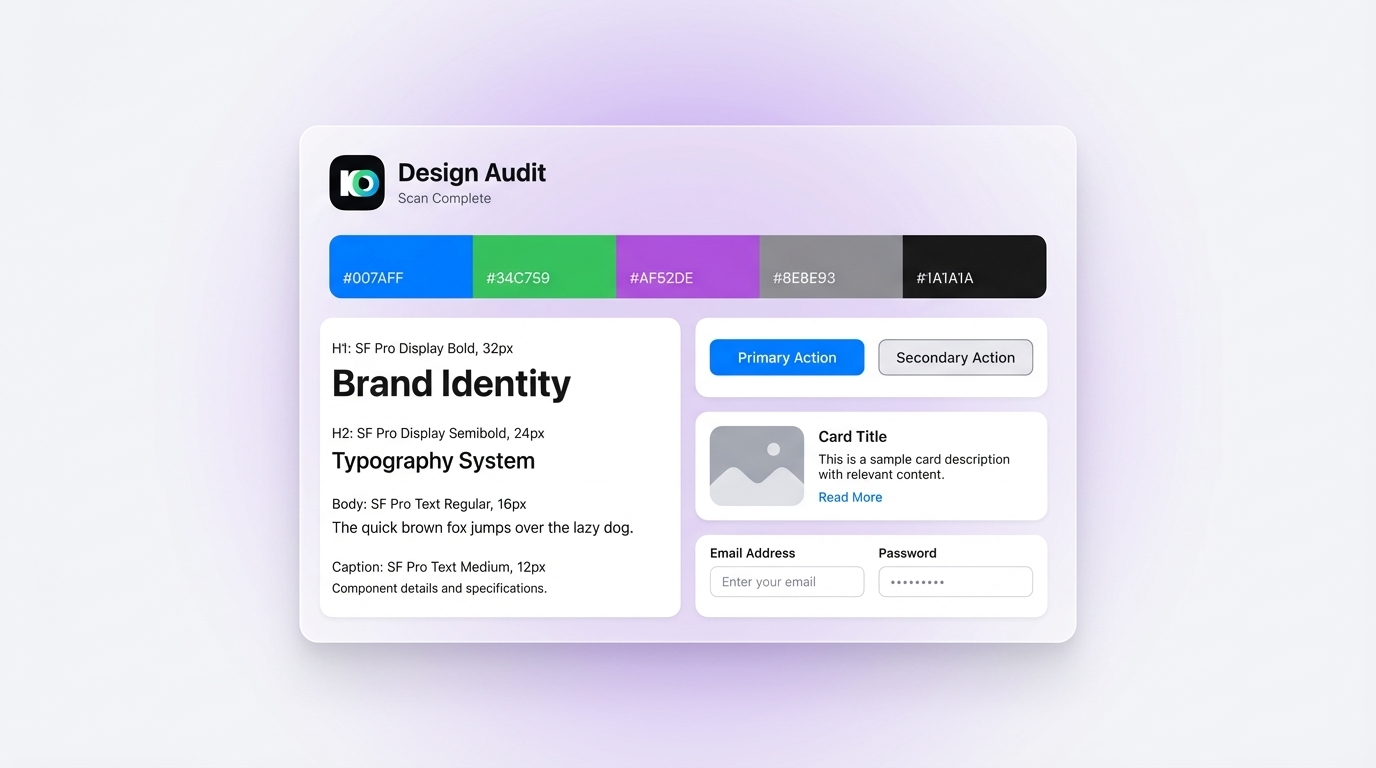

Inspo AI brand scanner and design audit interface: design consistency and pattern analysis

Is natural language search for design inspiration accurate enough for professional work?

Yes, with one important caveat: the quality of results depends directly on query quality. Vague or under-specified queries return broad results. Specific, multi-attribute queries return highly targeted references.

Nielsen Norman Group found that users achieve better search outcomes when they articulate intent clearly — a finding that applies directly to natural language design search. A query that names the platform (mobile vs. web), the industry vertical, the color temperature, the interaction context, and the desired emotional tone gives the AI enough signal to return professional-quality references.
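One way to apply this checklist is to build queries from named attributes so that gaps are visible before you search. The `DesignQuery` class below is a hypothetical helper, not part of any tool; its fields mirror the attributes listed above, plus a `screen` field for the screen type, and it simply joins whatever you fill in into one plain-English sentence.

```python
from dataclasses import dataclass, fields


@dataclass
class DesignQuery:
    """Hypothetical helper: one field per query attribute worth naming."""
    platform: str = ""
    industry: str = ""
    screen: str = ""
    color_temperature: str = ""
    interaction: str = ""
    tone: str = ""

    def missing(self) -> list[str]:
        """Names of attributes left empty, i.e. signal the search tool won't get."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def to_text(self) -> str:
        """Join the filled-in attributes into one plain-English query."""
        head = " ".join(p for p in (self.tone, self.platform, self.industry, self.screen) if p)
        extras = [p for p in (self.interaction, self.color_temperature) if p]
        return ", ".join(p for p in [head, *extras] if p)


query = DesignQuery(
    platform="mobile",
    industry="fintech",
    screen="transaction history list",
    color_temperature="cool dark tones",
    interaction="pull-to-refresh",
    tone="calm",
)
print(query.to_text())
print(DesignQuery(screen="modern app").missing())
```

A vague query such as `DesignQuery(screen="modern app")` reports five empty attributes, a quick signal that it is too under-specified to return targeted references.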

For professional design work, natural language search works best as a first pass. It surfaces a wide reference pool quickly. The designer then curates from that pool rather than starting from scratch.

The limitation is that AI search sometimes surfaces visually similar assets that are functionally different. A query for "settings screen" might return profile pages that look similar but serve a different purpose. Designers need to apply their own judgment to evaluate functional fit, not just visual match.

For teams that maintain consistent visual standards, combining natural language search with a brand scanner adds an extra layer of precision. Inspo AI offers a Brand Scanner alongside search, so designers can verify that selected references align with their existing brand system before pinning them to a moodboard.

Start Finding UI Inspiration the Smarter Way

Natural language search removes the single biggest friction point in the design inspiration phase: the gap between how designers think and how traditional tools are organized. When you can describe a concept in a sentence and receive precise UI references, the early stage of design becomes faster, more collaborative, and better grounded in real visual precedent.

The key to sharp results is specificity. Name the platform, the industry, the color temperature, the interaction type, and the emotional register. Each attribute you add gives the AI more signal and narrows the result set toward what you actually need.

If you want to put this into practice today, Inspo AI gives you a 150,000+ asset library with AI-powered semantic search, a connected moodboard builder, and a Brand Scanner to check consistency. Plans start at $5 per month. Start free and build your first reference board in under 20 minutes.