TL;DR: AI chat design tools let designers describe a visual concept in plain language and receive a visual output within seconds. Understanding how to write strong prompts, choose the right tool for the output type, and integrate AI into a real design workflow is what separates designers who get mediocre results from those who get work worth shipping.

Introduction

The gap between a design idea and a visual artifact used to require hours of production work. Today, that gap closes in seconds. AI chat design tools accept a plain language description and return images, UI layouts, moodboards, or color systems with no manual rendering required.

For designers, brand teams, and agencies, this shift changes how creative work starts. The challenge has moved from "how do I produce this?" to "how do I describe what I want clearly enough to get a useful result?"

Let's Enhance's 2026 guide to AI image prompting notes that effective prompting is now model-specific: "ChatGPT works best with paragraphs and multi-turn edits; Midjourney V7 prefers short, high-signal phrases." That specificity matters. The designers who get the best results understand both the tool and the language it responds to.

This article covers what AI chat design tools are, how they work, how to prompt them effectively, and how to build them into a real design workflow.

What Is an AI Chat Design Tool?

An AI chat design tool is a software interface that accepts a text prompt or a conversation and returns a visual output. The output type depends on the tool: it might be an AI-generated image, a UI layout, a brand color palette, a moodboard, or a design variation based on a reference image.

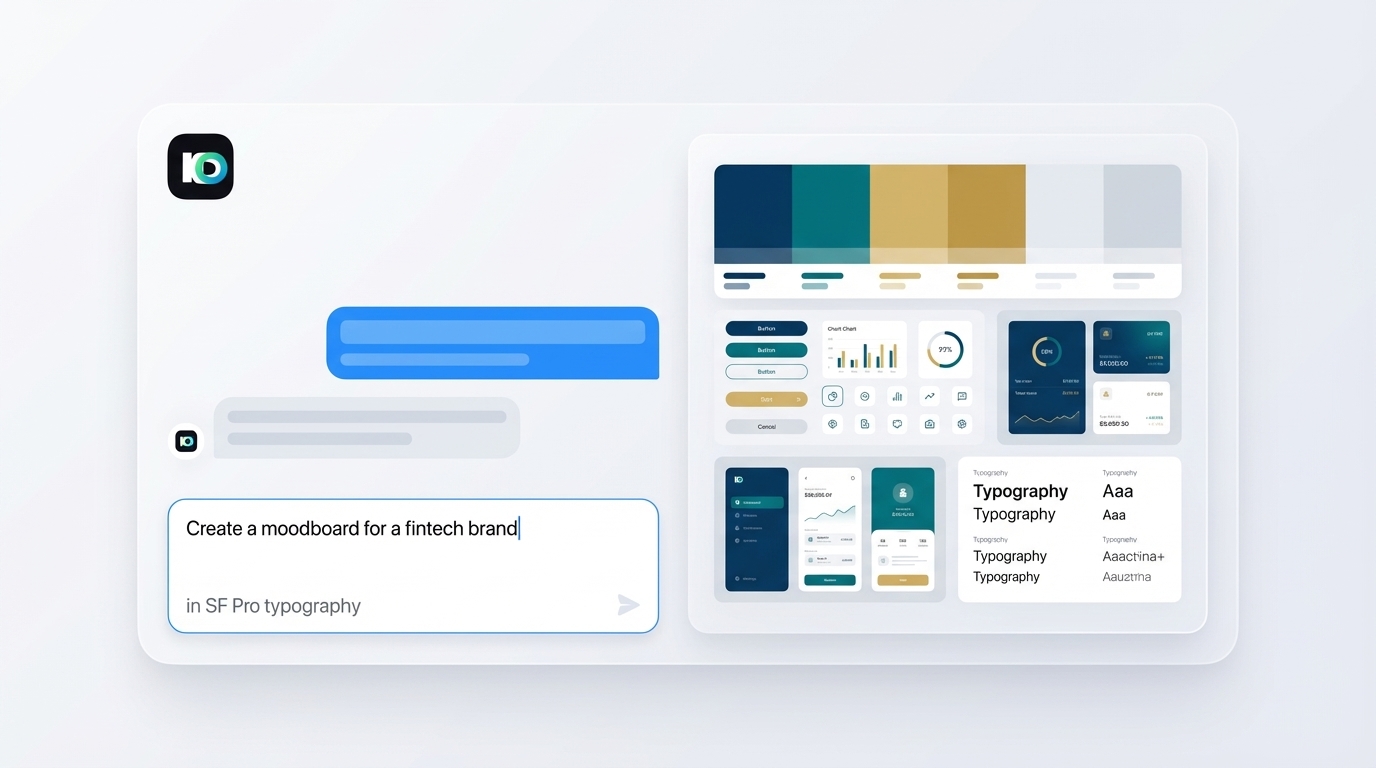

The "chat" component reflects the conversational interface. Unlike traditional design software, where a designer works with visual controls such as layers, brushes, and grids, a chat design tool uses natural language. The designer types what they want, and the tool generates a result. When the result is close but not right, the designer refines it with a follow-up message.

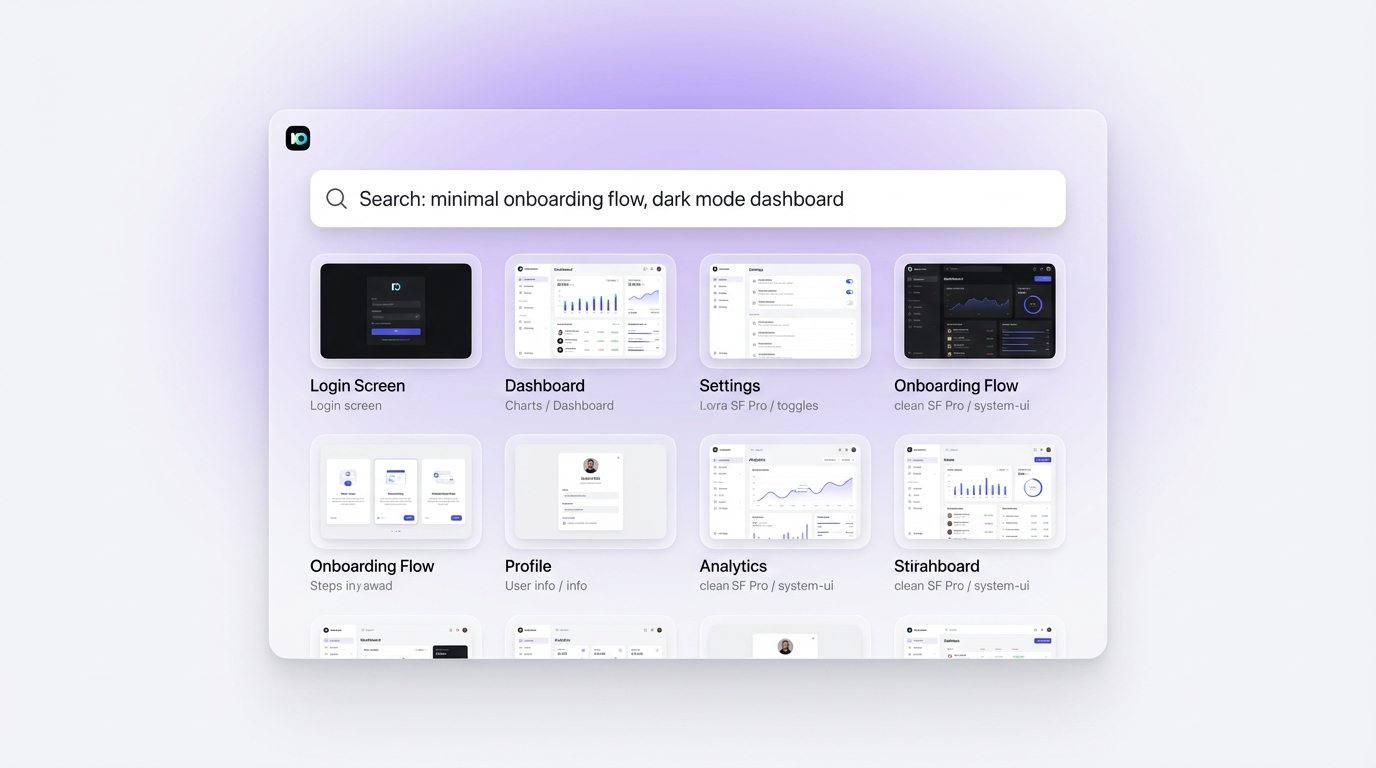

Tools vary significantly in what they accept and return. Midjourney and Adobe Firefly specialize in image generation. Figma AI handles UI layout suggestions within an existing design file. Inspo AI takes a different approach, combining AI-powered design search with moodboard building and brand analysis so designers can find curated references and generate inspiration boards through a single interface. That combination of search and generation is especially useful for designers who want AI assistance grounded in real-world examples rather than algorithmically invented aesthetics.

Leonardo.Ai's prompting guide describes the core challenge well: "Sometimes you have a clear vision for an image, but the AI model returns something generic or very far from what you have in mind." The solution is systematic prompting, not luck.

How Do You Write Effective Text Prompts for AI Design?

Strong prompts follow a consistent structure: subject, style, mood, lighting, and context. Each detail adds signal for the model to work from and narrows the range of wrong directions it can go.

A weak prompt looks like: "A logo for a tech startup."

A strong prompt looks like: "A minimal wordmark logo for a fintech startup. Clean sans-serif type. Dark navy and off-white palette. Corporate, trustworthy tone. No illustrations. Visual language similar to Stripe or Wise."

The difference is specificity. The second prompt defines the visual category (wordmark), the aesthetic (minimal, clean), the palette (dark navy and off-white), the mood (corporate, trustworthy), the constraints (no illustrations), and the reference aesthetic. Every element gives the model a constraint to work within.
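One way to keep that structure consistent across a team is to assemble prompts from named parts instead of typing them freehand. The sketch below is only an illustration: `DesignPrompt` and its field names are hypothetical, not part of any tool's API, and the output is plain text you would paste into whichever tool you use.

```python
from dataclasses import dataclass, field


@dataclass
class DesignPrompt:
    """Hypothetical container for the parts of a design prompt."""

    subject: str
    style: str = ""
    palette: str = ""
    mood: str = ""
    constraints: list[str] = field(default_factory=list)
    references: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty parts into short, sentence-style fragments."""
        parts = [self.subject, self.style]
        if self.palette:
            parts.append(f"{self.palette} palette")
        if self.mood:
            parts.append(f"{self.mood} tone")
        parts.extend(self.constraints)
        if self.references:
            parts.append("Visual language similar to " + " or ".join(self.references))
        # Strip stray periods so each fragment ends with exactly one.
        return " ".join(f"{p.strip().rstrip('.')}." for p in parts if p.strip())


prompt = DesignPrompt(
    subject="A minimal wordmark logo for a fintech startup",
    style="Clean sans-serif type",
    palette="Dark navy and off-white",
    mood="Corporate, trustworthy",
    constraints=["No illustrations"],
    references=["Stripe", "Wise"],
)
print(prompt.render())
```

Filled in this way, `render()` reproduces the strong prompt above word for word, and leaving a field empty simply drops that fragment.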

Let's Enhance's 2026 prompting guide recommends adding "4 to 6 high-signal details: medium, style, lighting, framing, mood, palette" and including a reference image when brand likeness matters. Reference images are particularly powerful because they give the model a visual anchor that text alone cannot fully provide.

For UI and product design prompts, include layout type (card grid, sidebar, full-bleed hero), device context (mobile, desktop, tablet), and interaction state (default, hover, filled form). The more the prompt reads like a design brief, the closer the output comes to what you need on the first pass.
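When one screen needs several device and state variants, it can help to generate the prompt text for each combination rather than rewrite it by hand. This is a minimal sketch under stated assumptions: the function name `ui_prompts`, the "Layout / Device / State" wording, and the budgeting-app example are all invented for illustration, and no specific tool requires this format.

```python
from itertools import product


def ui_prompts(screen: str, layout: str, devices: list[str], states: list[str]) -> list[str]:
    """Return one prompt per device/state combination for a single screen."""
    return [
        f"{screen}. Layout: {layout}. Device: {device}. State: {state}."
        for device, state in product(devices, states)
    ]


variants = ui_prompts(
    screen="Sign-up screen for a budgeting app",  # hypothetical project
    layout="full-bleed hero",
    devices=["mobile", "desktop"],
    states=["default", "filled form"],
)
for line in variants:
    print(line)
```

Two devices and two states yield four prompts, each stating the layout type, device context, and interaction state explicitly.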

What Is the Best AI Chat Tool for Converting Text to Visual Output?

The answer depends on what kind of visual output you need. Different tools specialize in different output types, and choosing the wrong one leads to poor results regardless of prompt quality.

For photorealistic images and artistic compositions, Midjourney V7 and Adobe Firefly lead the category. Midjourney excels at mood and aesthetic exploration. Firefly integrates directly into Adobe products and, according to Adobe, is trained on licensed and public-domain content, which matters for agency and brand work where commercial rights are a concern.

For UI and product design layouts, Figma AI generates wireframes and layout suggestions within a live Figma file. Galileo AI produces full UI screens from text descriptions and exports directly to Figma, making it useful early in the product design process.

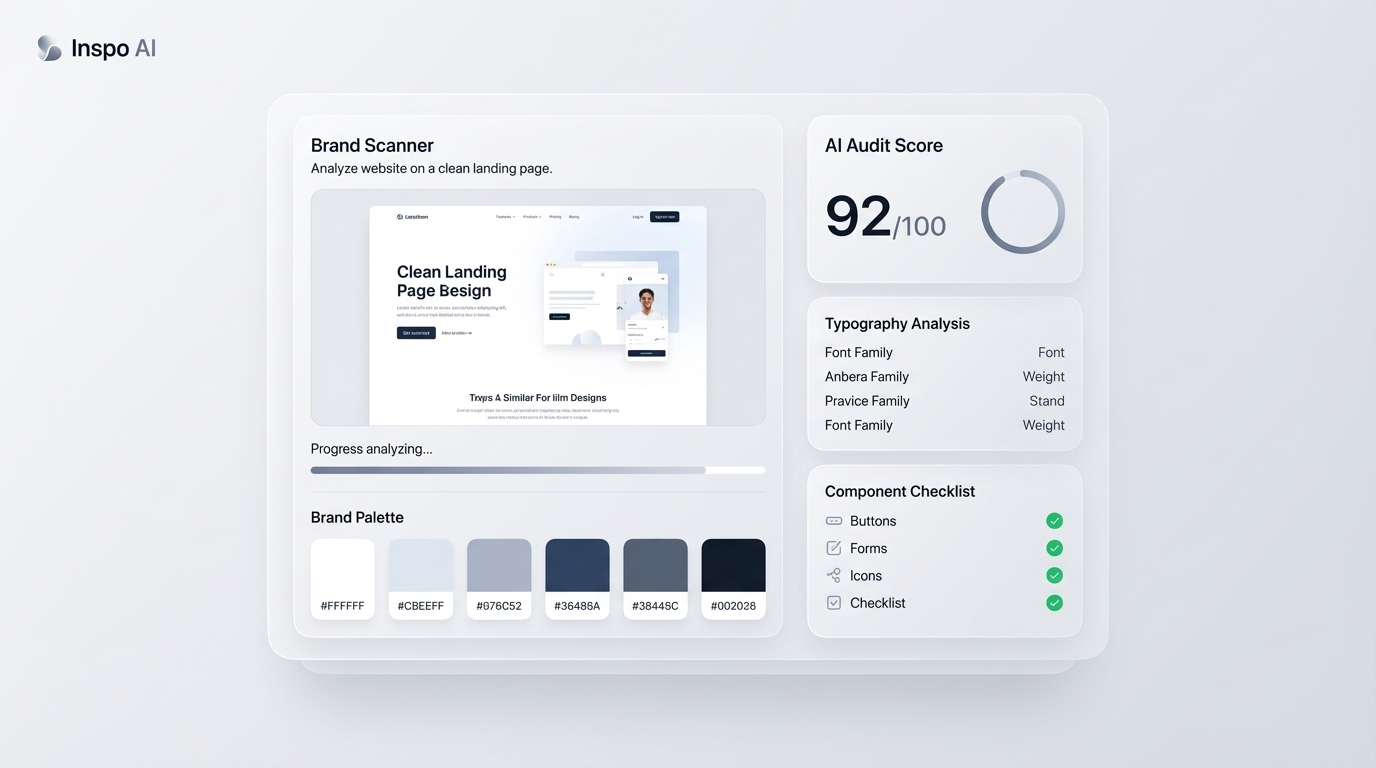

For design research and moodboarding, Inspo AI offers something distinct: an AI-powered search engine that understands visual style combined with a moodboard builder and creator studio. With 150,000+ curated design assets and a 4.2 Trustpilot rating from 180+ teams, it is built for daily professional use, offering designers real-world curated references rather than synthetically generated images as a foundation for project work.

Leonardo.Ai remains a strong general-purpose option, offering fine control over generation parameters and a library of style presets suited to product and brand work.

How Does AI Turn a Text Prompt Into an Image?

Most current AI image generators rely on a machine learning technique called diffusion. The model starts with random visual noise and uses the text prompt to guide a gradual refinement process. At each step, a trained network estimates the noise remaining in the current image, conditioned on the prompt, and removes part of it. The process repeats over many steps, typically tens to hundreds depending on the model and its settings, until the image converges on a result that fits the prompt.

Under the hood, the model converts the text prompt into a numerical representation called an embedding, using a text encoder (CLIP and T5 are common choices). That embedding defines a region in the model's learned visual space. The diffusion process then generates an image that corresponds to that region.
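The overall shape of that loop, turning text into a target and then refining noise toward it step by step, can be shown with a toy example. This is not a diffusion model: a real system uses a trained neural network at every step, whereas this sketch fakes the embedding with a hash and replaces the network with a simple nudge toward the target. All function names here are hypothetical.

```python
import hashlib
import random


def toy_embedding(prompt: str, size: int = 8) -> list[float]:
    """Map text to a fixed vector in [0, 1]. A stand-in for a real text encoder."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [byte / 255 for byte in digest[:size]]


def distance(a: list[float], b: list[float]) -> float:
    """Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def toy_refine(prompt: str, steps: int = 50, rate: float = 0.1, seed: int = 0):
    """Start from random noise and nudge every value toward the prompt's target."""
    rng = random.Random(seed)
    target = toy_embedding(prompt)
    image = [rng.random() for _ in target]  # the "image" begins as pure noise
    history = [distance(image, target)]
    for _ in range(steps):
        # Toy update: close 10% of the remaining gap on each step.
        image = [x + rate * (t - x) for x, t in zip(image, target)]
        history.append(distance(image, target))
    return image, history


_, history = toy_refine("minimal wordmark logo, dark navy")
print(f"start: {history[0]:.3f}  end: {history[-1]:.3f}")
```

The distance to the target shrinks on every step, which mirrors how the output of a generator gets progressively closer to the prompt. Changing the prompt changes the target, so the same noise converges somewhere else.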

Newo.ai's explanation of AI image generation describes the experience from the user's side: "Just a few lines of text to the image generator. An algorithm trained on an extensive set of images can create it in seconds."

The training data matters significantly. Models trained on broad internet image sets have a wide aesthetic range but may produce generic results for niche visual styles. Models trained on domain-specific data produce more consistent results within that domain. For design-specific work, tools trained on curated design references tend to produce outputs that align more closely with professional visual standards.

Can AI Design Tools Understand Complex Creative Briefs?

AI design tools produce better outputs from complex briefs when the brief is structured as a good prompt. A two-sentence prompt produces a two-sentence interpretation. A detailed brief that defines the audience, tone, visual references, constraints, and deliverable produces a significantly more useful result.

The models do not "understand" a brief in the way a human designer reads between the lines. They recognize patterns in language and map them to trained visual associations. That distinction matters in practice. Phrases like "confident but approachable" or "premium without being cold" are interpretable by a human designer who has worked with many brands. For an AI model, those phrases need translation into specific visual signals: color temperature, font weight, spacing density, and photographic style.
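That translation step can be made explicit with a shared lookup that a team maintains alongside its brand guidelines. The mapping below is purely illustrative: the keywords and the visual signals attached to them are assumptions, not an established standard, and `translate_brief` is a hypothetical helper.

```python
# Illustrative mapping only: these translations are assumptions, not a standard.
VISUAL_SIGNALS = {
    "confident": ["bold font weight", "high-contrast palette"],
    "approachable": ["warm color temperature", "rounded letterforms"],
    "premium": ["generous spacing", "restrained palette"],
}


def translate_brief(phrase: str) -> list[str]:
    """Return the visual signals for each known keyword found in the phrase."""
    words = phrase.lower().replace(",", " ").split()
    signals: list[str] = []
    for word in words:
        signals.extend(VISUAL_SIGNALS.get(word, []))
    return signals


print(translate_brief("confident but approachable"))
```

A keyword lookup like this ignores negation and nuance, so a phrase such as "premium without being cold" still needs a designer to decide what "not cold" should look like.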

A 2025 Medium analysis of visual design prompting notes that designers get the best results when they treat prompting as a skill to develop rather than a one-shot process. Iteration is built into the workflow. A first output gives you something to react to, and that reaction informs the refined prompt.

For complex brand briefs, AI tools work best as a starting point rather than a final output. They generate options fast. Designers then apply judgment to select, combine, and refine until the work meets the strategic standard the brief requires.

What Is the Difference Between AI Image Generation and AI Design Tools?

AI image generation and AI design tools overlap in some functions but serve different purposes.

AI image generation tools (Midjourney, Firefly, DALL-E, Stable Diffusion) take a text or image input and produce a new image. The output is a static visual file. These tools are excellent for concept exploration, campaign imagery, illustration, and atmosphere references.

AI design tools are broader in scope. They handle image generation but also include brand analysis, layout suggestions, moodboard assembly, design audit, asset organization, and workflow integration. The design context matters to them: they account for the fact that a color palette needs to work in context, that a UI layout needs to meet accessibility standards, and that a brand asset needs consistency across touchpoints.

Figma's 2025 roundup of AI design tools describes the distinction: tools like Figma AI, Galileo, and Framer AI embed AI into the design process rather than producing standalone image outputs. They understand design structure, not just visual aesthetics.

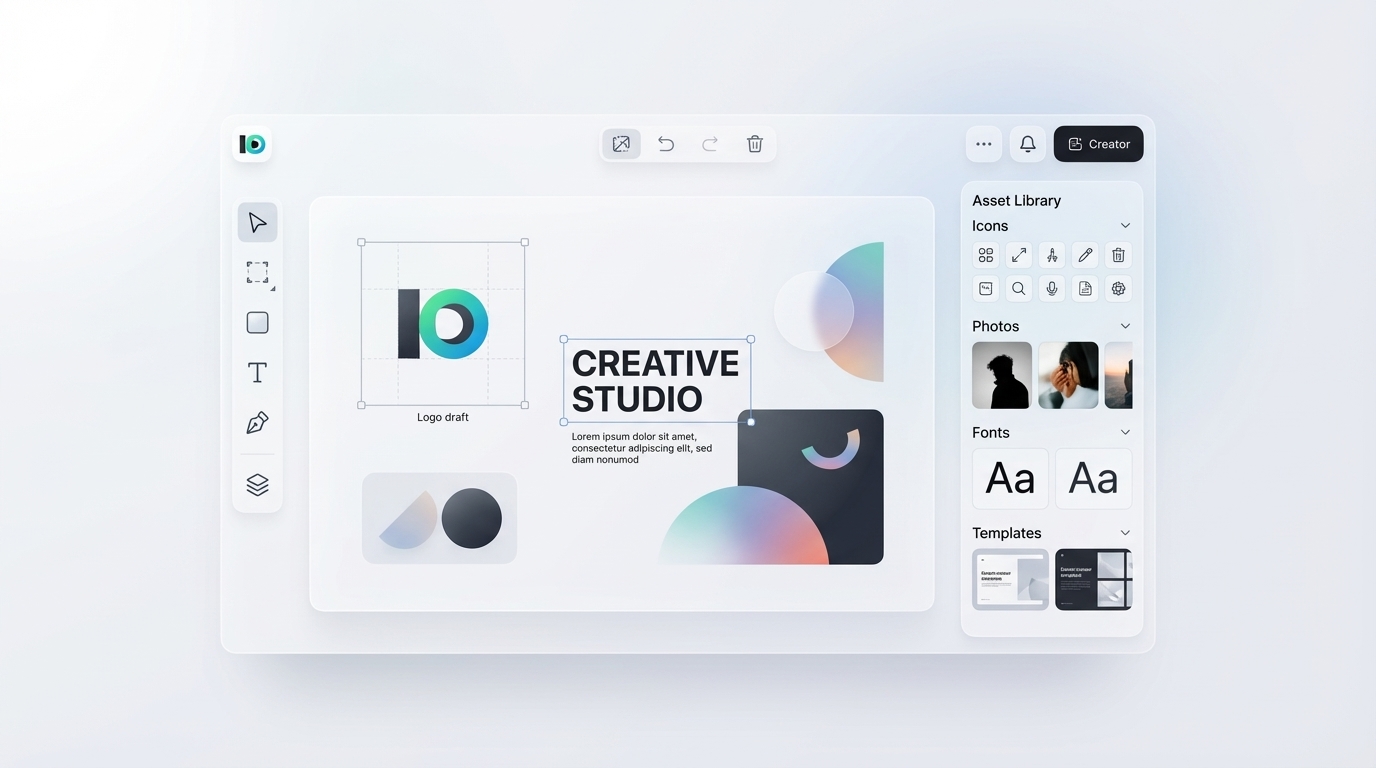

Inspo AI sits firmly in the AI design tools category. Its features, including AI design search, moodboard builder, design audit, brand scanner, and creator studio, work together as a design intelligence layer rather than a standalone image generator. That integration is what makes it useful as a daily professional tool rather than a novelty.

How Do Designers Integrate AI Chat Tools Into Their Daily Workflow?

The most effective integration treats AI chat tools as a first-pass research layer and a rapid iteration engine, not a replacement for design judgment.

In practice, a working designer's AI-assisted workflow might look like this: a brief arrives, the designer uses an AI-powered design search tool to pull reference aesthetics relevant to the project, builds a rough moodboard in minutes, then uses a generation tool to produce five to ten rough visual directions. Those directions go into a review with the creative director or client. Feedback sharpens the prompt for the next round.

Builder.io's 2026 guide to AI design tools notes that AI tools help designers work faster "by taking care of repetitive tasks and generating new ideas. They can turn plain text into layouts, convert mockups into code, and automate routine work like background removal or color palette generation."

The key to workflow integration is removing friction at the point of creative ambiguity. The hardest moment in any design project is the blank canvas at the start. AI tools eliminate that blank canvas by generating a starting point quickly. The designer's job then shifts to evaluation and direction, which are the highest-value parts of the work.

For teams, AI chat tools also reduce the cognitive load of onboarding new designers onto a project. A well-structured prompt that captures the brand parameters and project goals gives a new team member instant orientation. The AI output becomes a working brief that shows rather than tells, cutting alignment time significantly.

Start Faster, Design Better

AI chat design tools have permanently changed the starting point of creative work. The designers and teams who get the most from them treat prompt writing as a craft, choose tools matched to the output type they need, and use AI as a research and iteration engine rather than a final output generator.

Inspo AI gives designers and brand teams a dedicated AI-powered workspace for design research, moodboarding, brand scanning, and creative production. With 150,000+ curated design assets and plans from $5 per month, it is the fastest way to move from a brief to a creative direction worth pursuing.