A practical guide to generating UI designs with AI chat in 2025, covering which tools produce the most usable outputs, how to write prompts that drive real design value, and how AI-generated UI fits into a professional design process.

TL;DR: Generating UI designs with AI chat works best as a concept exploration and iteration tool, not a production design shortcut. The best AI UI chat tools in 2025 (v0.dev, Galileo AI, UX Pilot, InspoAI Creator Studio) each optimize for different output types and design stages. Understanding where each fits prevents the most common failure mode: using an AI UI tool at the wrong stage of the design process.

Table of Contents

- What does "AI generate UI design" actually mean in 2025?

- Which AI chat tools can generate usable UI designs?

- How do you write prompts that produce good AI UI outputs?

- What is the quality ceiling of AI-generated UI design?

- How does AI-generated UI compare to designer-produced wireframes?

- What is the right role for AI UI generation in a professional design process?

- How do you iterate on an AI-generated UI using chat?

- Conclusion

Introduction

The ability to describe a user interface in plain language and receive a working visual layout has moved from research demo to everyday design tool in two years. The question in 2025 is no longer "can AI generate UI?" but "where does AI-generated UI actually add value in a real design process, and where does it mislead?"

Designers who have used AI UI generation in production workflows tend to report the same finding: AI-generated UI is exceptional for concept exploration and stakeholder communication, and risky when treated as a production starting point. The outputs look complete. They often are not.

This guide covers what AI chat UI generation actually produces, which tools are worth using, and how to incorporate AI generation into a design workflow that still produces excellent production results.

What does "AI generate UI design" actually mean in 2025?

"AI generate UI design" covers three distinct output types with very different quality profiles:

1. Code-based UI generation: Tools like v0.dev (Vercel) and Bolt.new generate functional front-end code (React, HTML/CSS) from natural language descriptions. The output is a live, interactive UI component. Quality varies widely with prompt specificity. Best use: rapid prototyping and MVP scaffolding.

2. Visual wireframe/mockup generation: Tools like Galileo AI, Uizard, and UX Pilot generate Figma-importable wireframes from text descriptions. The output is a visual mockup with placeholder content and approximate layout structure. Best use: early concept communication.

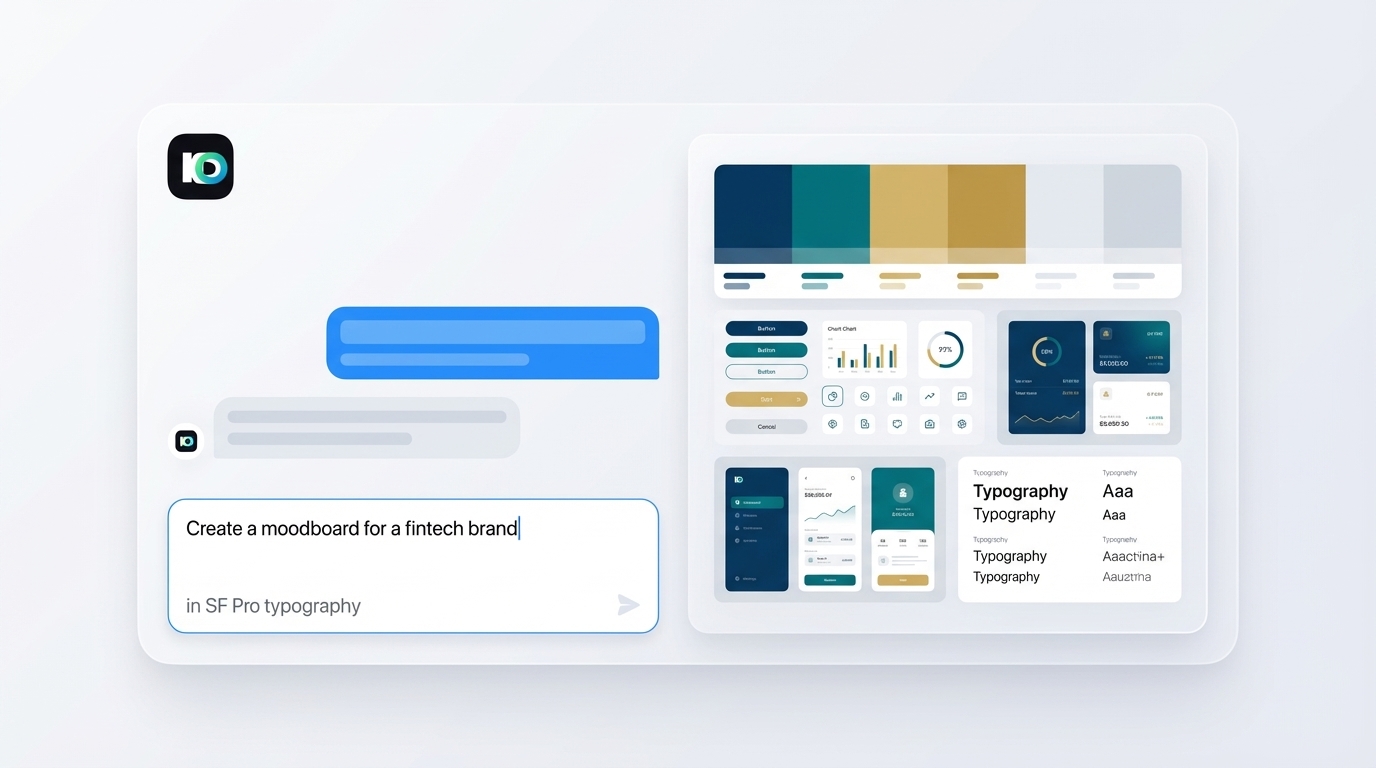

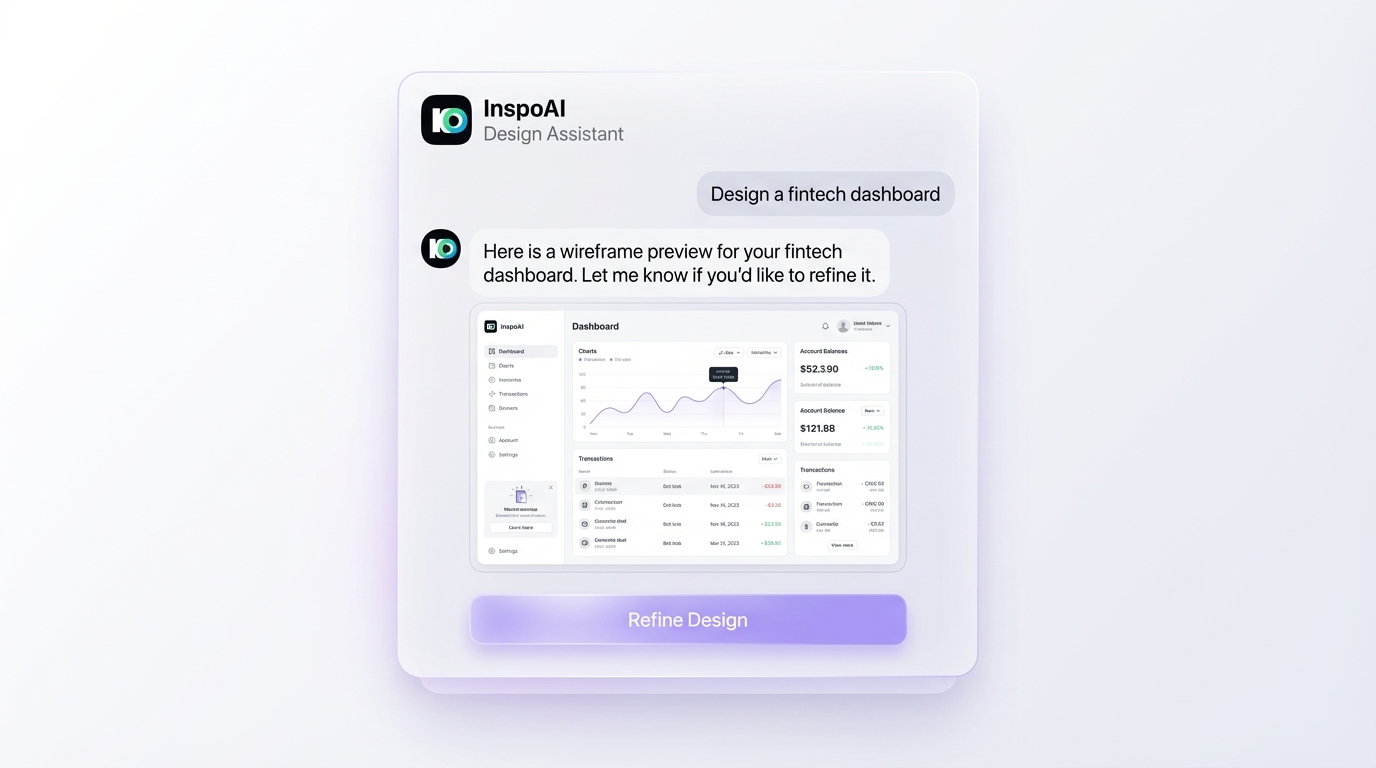

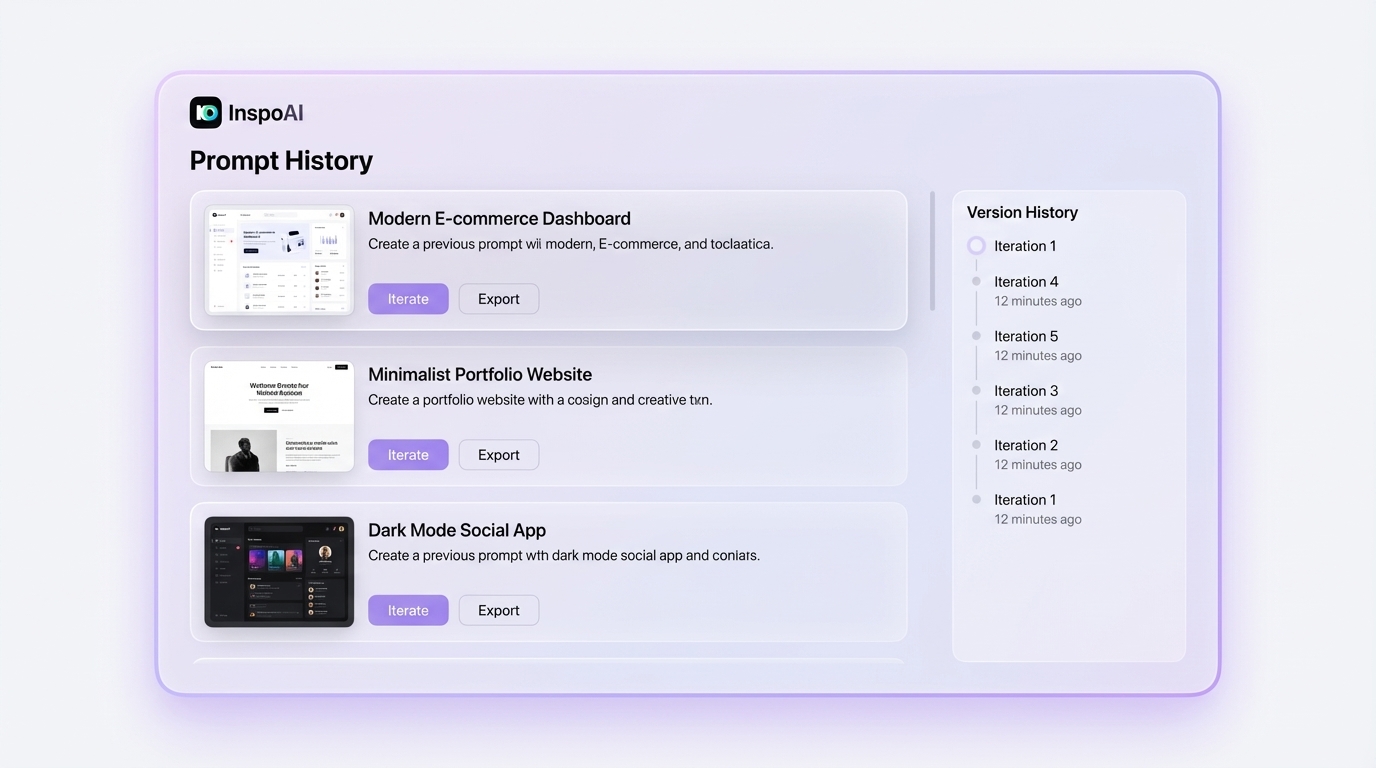

3. Design direction and aesthetic generation: Tools like InspoAI Creator Studio generate visual design direction outputs: color systems, typography pairings, mood visuals, and brand concept explorations. The output is not functional UI code but a high-quality visual reference for design system development. Best use: stakeholder alignment and design direction exploration.

Each category is useful. Conflating them leads to the common mistake of using a visual wireframe generator expecting code output, or using a code generator expecting production-quality visual design. Source: Medium - Top AI UI Generators 2025

Which AI chat tools can generate usable UI designs?

v0.dev (Vercel): The strongest tool for code-based UI generation. Generates React components from natural language prompts with surprisingly clean code output. Iterates well through chat. Produces functional components you can run immediately. Best for developers who think in component terms.

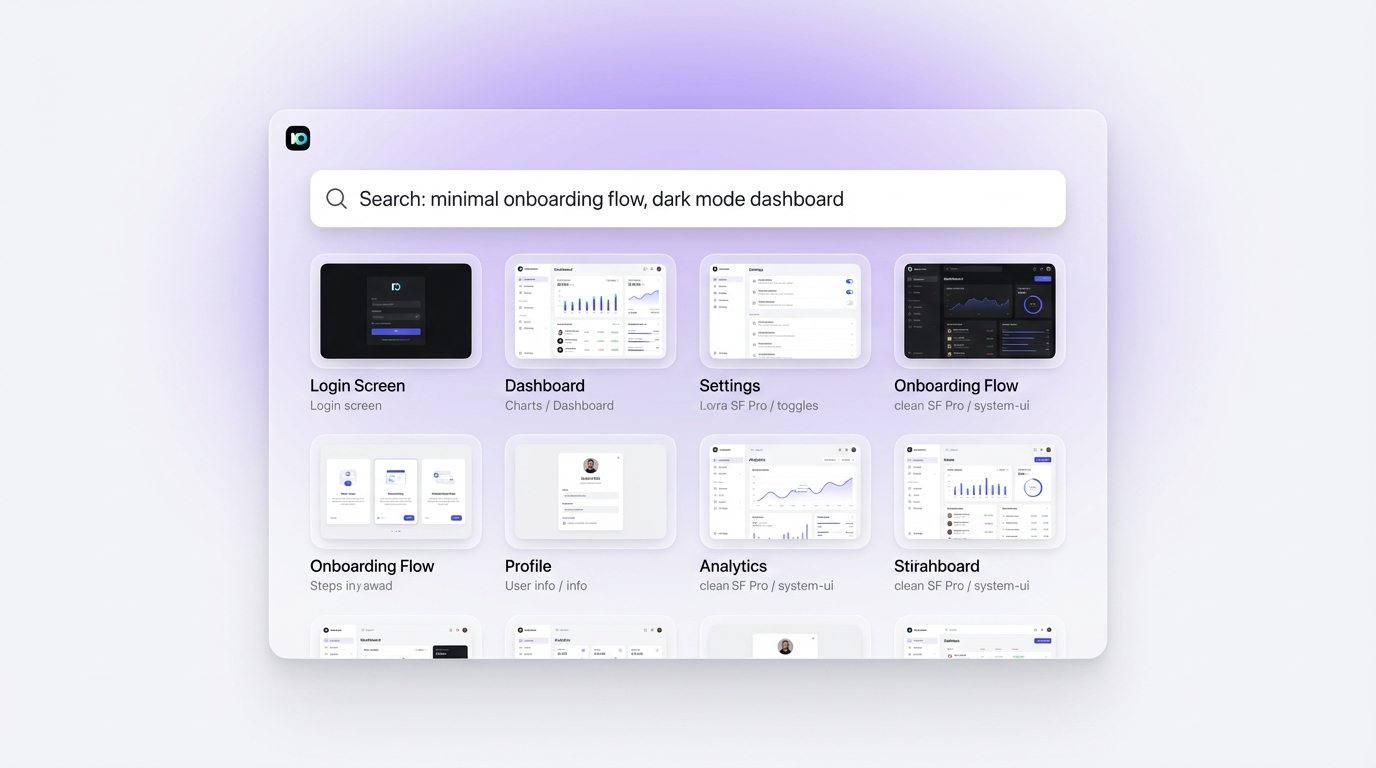

Galileo AI: Generates Figma-importable wireframes from text. Good at multi-screen app flows. Weaker at design system consistency across screens. Imports to Figma via plugin.

UX Pilot: Chat-based wireframe generation focused on UX flow and screen structure. Better than Galileo at maintaining navigation consistency across generated screens. Weaker on visual design quality.

Uizard: Drag-and-drop plus AI wireframe generation. Good for non-designers who need functional prototypes quickly. Not optimized for professional UX design workflows.

Figma AI (Make): Figma's native AI tool generates components and layouts directly in Figma. Tight integration is the main advantage. Output quality is competitive but constrained to Figma's existing component ecosystem.

InspoAI Creator Studio: Specializes in visual design direction and brand concept generation rather than wireframe output. Strongest for aesthetic exploration and moodboard-ready design assets. Best when paired with a wireframe tool for production workflows. Source: Prototypr

How do you write prompts that produce good AI UI outputs?

Effective AI UI prompts specify five parameters: product type, user context, visual style, constraints, and fidelity.

Product type: Be specific. Not "an app" but "a mobile fintech app for tracking personal investments, iOS native design language."

User context: Describe the user and their goal. "A first-time investor reviewing their portfolio performance for the month" gives the AI context to select appropriate information hierarchy and layout density.

Visual style: Reference established aesthetics. "Material You design language with purple accent" or "minimal SaaS, lots of white space, Inter font, inspired by Linear.app" produce far better results than "modern and clean."

Constraints: List must-haves. "Must include a data visualization component," "must have a bottom navigation bar," "must work at 390px width."

Fidelity: State the purpose. "Low-fidelity wireframe for stakeholder discussion" produces a different output than "high-fidelity visual mockup for client presentation." Requesting a fidelity the tool is not built to deliver is a common source of disappointment. Source: Reddit - Building UIs with AI
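The five parameters can be treated as a fill-in template that is checked before the prompt is sent. The Python sketch below is a minimal illustration under stated assumptions: the `UIPrompt` class, its field names, and the labeled-line output format are hypothetical, not a format required by v0.dev, Galileo AI, or any other tool, and in this sketch constraints are an optional list while the other four fields are required. The example values are taken from the parameter descriptions above. The code only assembles prompt text; it does not call any tool's API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UIPrompt:
    """Hypothetical template for the five prompt parameters (not a tool's required format)."""
    product_type: str
    user_context: str
    visual_style: str
    fidelity: str
    constraints: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of the required text parameters that are still blank."""
        required = {
            "product_type": self.product_type,
            "user_context": self.user_context,
            "visual_style": self.visual_style,
            "fidelity": self.fidelity,
        }
        return [name for name, value in required.items() if not value.strip()]

    def render(self) -> str:
        """Assemble the parameters into one plain-language prompt, one labeled line each."""
        lines = [
            f"Product: {self.product_type}",
            f"User and goal: {self.user_context}",
            f"Visual style: {self.visual_style}",
            f"Fidelity: {self.fidelity}",
        ]
        # Each constraint is stated as its own must-have line.
        if self.constraints:
            lines.append("Must-haves:")
            lines.extend(f"- {c}" for c in self.constraints)
        return "\n".join(lines)


prompt = UIPrompt(
    product_type="a mobile fintech app for tracking personal investments, iOS native design language",
    user_context="a first-time investor reviewing their portfolio performance for the month",
    visual_style="minimal SaaS, lots of white space, Inter font, inspired by Linear.app",
    fidelity="low-fidelity wireframe for stakeholder discussion",
    constraints=[
        "must include a data visualization component",
        "must have a bottom navigation bar",
        "must work at 390px width",
    ],
)

# Refuse to send an underspecified prompt.
if prompt.missing_fields():
    raise ValueError(f"Prompt is missing: {prompt.missing_fields()}")
print(prompt.render())
```

Filling the template field by field makes a gap, or a vague value such as "modern and clean", easy to spot before anything is generated.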

What is the quality ceiling of AI-generated UI design?

AI-generated UI has a consistent quality ceiling in 2025: it produces plausible, not optimal, design decisions.

A plausible layout uses standard conventions correctly: navigation at the top or bottom, primary action buttons in expected positions, content structured in a readable hierarchy. AI UI generation is now reliably good at this level.

Optimal design decisions require user research context, brand strategy alignment, accessibility testing, and iterative refinement based on actual usage data. AI has no access to any of these. A generated UI does not know that your users are primarily power users who need density over spaciousness, that your brand strategy calls for premium whitespace, or that a previous version of this screen showed high abandonment at the form step.

The implication for professional designers: AI-generated UI is a starting point with a known quality ceiling. It gets you to "plausible" in minutes. Getting from "plausible" to "optimal" still requires human design judgment, user testing, and iterative refinement. The AI generation step replaces the blank canvas problem; it does not replace the design process.

This ceiling is higher than it was two years ago and is likely to keep rising. But understanding the current ceiling prevents the credibility risk of shipping AI-generated UI that has not been refined through a proper design process. Source: Designlab

How does AI-generated UI compare to designer-produced wireframes?

For speed at the concept exploration stage, AI-generated UI wins. A designer can generate ten layout variations of a dashboard concept in 30 minutes using AI chat. Producing ten hand-crafted wireframe variations in the same time is not realistic.

For quality at the production stage, designer-produced wireframes win. A skilled designer's wireframe reflects specific UX research, navigation principles, accessibility considerations, and business constraints that AI cannot infer from a text prompt.

The practical recommendation from Prototypr's 2025 AI UI tool test: use AI-generated UI for early concept validation with stakeholders, then use the validated direction to guide designer-produced wireframes. This uses AI at the stage where it adds the most value (speed at low fidelity) and keeps humans in control at the stage where quality matters most (production fidelity).

A specific workflow that works well: generate five AI UI variations, review with stakeholders to identify which direction resonates, then use InspoAI Creator Studio to refine the visual direction into a high-quality design reference that the production Figma work follows.

What is the right role for AI UI generation in a professional design process?

AI UI generation belongs at the top of the design funnel: concept exploration and stakeholder alignment, not production design.

The five moments where AI UI generation adds clear professional value:

1. Client brief response: Generate five direction explorations to present alongside a brief response, giving clients something concrete to react to before any production design begins.

2. Internal design review kickoff: Start a design sprint by generating a set of AI explorations that the team critiques and uses to establish shared direction, rather than each designer independently interpreting the brief.

3. Competitor feature parity mapping: Generate AI mockups of features that competitive products have and your client's product does not, for gap analysis presentations.

4. Rapid hypothesis testing: Generate a UI variation that addresses a specific UX hypothesis in minutes, get quick feedback from stakeholders, and decide whether it is worth investing in a proper design iteration.

5. Non-designer stakeholder communication: When engineering or business stakeholders need to understand what a planned UI feature looks like before design work begins, a generated AI mockup communicates shape and scope more effectively than a written description.

None of these roles involve AI UI generation replacing production design work. They all involve AI generation accelerating the pre-production phases. Source: Muzli

How do you iterate on an AI-generated UI using chat?

Effective chat iteration on AI-generated UI follows an additive refinement pattern rather than a wholesale regeneration pattern.

Step 1: Generate an initial UI from a detailed prompt. Evaluate the output for three things: layout structure, visual hierarchy, and visual style. Note which of the three needs the most improvement.

Step 2: Iterate on the weakest dimension first. "Keep the overall layout but make the typography hierarchy stronger by increasing the contrast between headline and body sizes" is more effective than "make it look better."

Step 3: Lock in what works. Before changing visual style, confirm that layout and hierarchy are correct. Applying a new style to an incorrect layout only produces the same incorrect layout in a different style.

Step 4: Add constraints progressively. Do not try to specify everything in the first prompt. Add constraints as the generation converges toward the target direction.

Step 5: Reference your inspiration library. Prompting with "similar to the card UI I have in my InspoAI collection tagged 'dark SaaS'" produces more precise direction than a purely verbal description. Combining an organized design inspiration library with AI generation is one of the highest-leverage design exploration workflows in 2025.
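Steps 1 to 4 amount to a small loop: score the three dimensions, fix the weakest eligible one, lock it, and carry constraints forward into each follow-up. The Python sketch below is a hypothetical illustration of that pattern, not a feature of any tool: the `IterationSession` class, the integer scores (lower means weaker), and the follow-up wording are assumptions, and it builds prompt text only rather than calling an AI service. It encodes Step 3 by keeping visual style ineligible until layout and hierarchy are both locked.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# The three evaluation dimensions from Step 1.
DIMENSIONS = ("layout structure", "visual hierarchy", "visual style")


@dataclass
class IterationSession:
    """Hypothetical tracker for the additive refinement pattern (not a real tool API)."""
    locked: Set[str] = field(default_factory=set)
    constraints: List[str] = field(default_factory=list)

    def next_focus(self, scores: Dict[str, int]) -> Optional[str]:
        """Return the dimension to iterate on next; lower score means weaker."""
        # Only dimensions not yet locked in are candidates.
        candidates = [d for d in DIMENSIONS if d not in self.locked]
        # Step 3: visual style waits until layout and hierarchy are both locked.
        if "visual style" in candidates and len(candidates) > 1:
            candidates.remove("visual style")
        if not candidates:
            return None
        # Step 2: among eligible dimensions, fix the weakest first.
        return min(candidates, key=lambda d: scores[d])

    def lock(self, dimension: str) -> None:
        """Mark a dimension as correct so later prompts preserve it."""
        self.locked.add(dimension)

    def follow_up(self, dimension: str, change: str,
                  new_constraint: Optional[str] = None) -> str:
        """Build an additive follow-up prompt instead of a full regeneration."""
        # Step 4: constraints accumulate one at a time as the output converges.
        if new_constraint:
            self.constraints.append(new_constraint)
        keep = ", ".join(sorted(self.locked)) if self.locked else "everything else"
        message = f"Keep {keep} as is. Change only the {dimension}: {change}."
        if self.constraints:
            message += " Constraints so far: " + "; ".join(self.constraints) + "."
        return message


session = IterationSession()
scores = {"layout structure": 4, "visual hierarchy": 2, "visual style": 1}
# "visual hierarchy": style scores lower but is not eligible while layout is unlocked.
focus = session.next_focus(scores)
session.lock("layout structure")
print(session.follow_up(focus, "increase the contrast between headline and body sizes",
                        "must have a bottom navigation bar"))
```

The point of the structure is that every follow-up names what to keep and changes one dimension at a time, which is the additive pattern rather than a wholesale regeneration.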

Conclusion

AI chat UI generation is a legitimate professional tool when used at the right stage of the design process. It accelerates concept exploration, improves stakeholder communication, and eliminates blank canvas syndrome. It does not replace UX research, production Figma work, or iterative user testing.

The designers getting the most value from AI UI generation are those who pair it with a strong research and inspiration foundation. Start generating with InspoAI Creator Studio at app.inspoai.io/creator-studio and use your curated inspiration library to inform every generation.